OpenCV in Practice: Gesture-Controlled Virtual Zoom

Author: 夏天是冰红茶

0. Project Introduction

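This project is built on a HandTrackingModule. The module used here differs from the one in my earlier posts, so follow the version in this article; the earlier HandTrackingModule is not sufficient for this project. We will use hand gestures to zoom one of my blog posters in and out. The demo below shows the result.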

1. Project Demo

2. Project Setup

First, create HandTrackingModule.py and gesture_zoom.py in the same folder and add an image of your choice (the code loads it as 1.png). Keep the image fairly small.

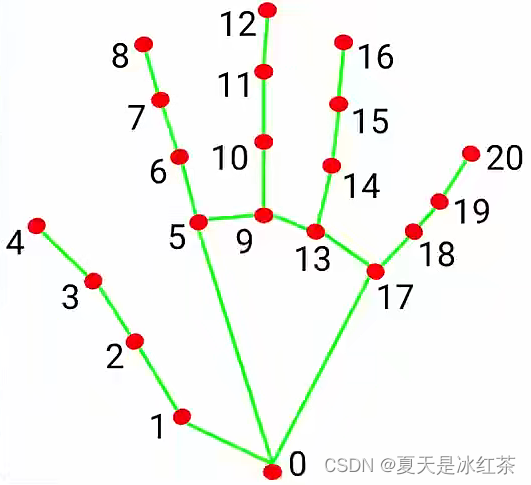

The project uses landmark 8, the index fingertip, so the zoom can be driven by the index fingers of the left and right hands.
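As a rough sketch of how landmark 8 can be used, the snippet below measures the distance between the two index fingertips. It assumes each hand is a dict whose "lmList" holds 21 [x, y] pixel points, which is the shape findHands returns in this article's module. The function name and the sample coordinates are made up for illustration.

```python
import math

INDEX_FINGER_TIP = 8  # landmark index of the index fingertip


def index_tip_distance(hand_a, hand_b):
    """Return the pixel distance between the index fingertips of two hands."""
    x1, y1 = hand_a["lmList"][INDEX_FINGER_TIP]
    x2, y2 = hand_b["lmList"][INDEX_FINGER_TIP]
    return math.hypot(x2 - x1, y2 - y1)


# Hypothetical data: 21 landmarks per hand, only index 8 matters here.
left = {"lmList": [[0, 0]] * 8 + [[100, 200]] + [[0, 0]] * 12}
right = {"lmList": [[0, 0]] * 8 + [[400, 600]] + [[0, 0]] * 12}
print(index_tip_distance(left, right))  # 500.0
```

This is the same calculation findDistance performs, minus the drawing.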

3. Code and Walkthrough

HandTrackingModule.py:

```python
import cv2
import mediapipe as mp
import math


class handDetector:
    def __init__(self, mode=False, maxHands=2, detectionCon=0.5, minTrackCon=0.5):
        self.mode = mode
        self.maxHands = maxHands
        self.detectionCon = detectionCon
        self.minTrackCon = minTrackCon

        self.mpHands = mp.solutions.hands
        self.hands = self.mpHands.Hands(static_image_mode=self.mode,
                                        max_num_hands=self.maxHands,
                                        min_detection_confidence=self.detectionCon,
                                        min_tracking_confidence=self.minTrackCon)
        self.mpDraw = mp.solutions.drawing_utils
        self.tipIds = [4, 8, 12, 16, 20]  # thumb, index, middle, ring, pinky tips
        self.fingers = []
        self.lmList = []

    def findHands(self, img, draw=True, flipType=True):
        """Detect hands; return a list of dicts (lmList, bbox, center, type)."""
        imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)
        self.results = self.hands.process(imgRGB)
        allHands = []
        h, w, c = img.shape
        if self.results.multi_hand_landmarks:
            for handType, handLms in zip(self.results.multi_handedness,
                                         self.results.multi_hand_landmarks):
                myHand = {}
                # lmList: landmarks converted to pixel coordinates
                mylmList = []
                xList = []
                yList = []
                for id, lm in enumerate(handLms.landmark):
                    px, py = int(lm.x * w), int(lm.y * h)
                    mylmList.append([px, py])
                    xList.append(px)
                    yList.append(py)

                # bbox: x, y, width, height
                xmin, xmax = min(xList), max(xList)
                ymin, ymax = min(yList), max(yList)
                boxW, boxH = xmax - xmin, ymax - ymin
                bbox = xmin, ymin, boxW, boxH
                cx, cy = bbox[0] + (bbox[2] // 2), \
                         bbox[1] + (bbox[3] // 2)

                myHand["lmList"] = mylmList
                myHand["bbox"] = bbox
                myHand["center"] = (cx, cy)

                # Swap the labels when flipType is set
                if flipType:
                    if handType.classification[0].label == "Right":
                        myHand["type"] = "Left"
                    else:
                        myHand["type"] = "Right"
                else:
                    myHand["type"] = handType.classification[0].label
                allHands.append(myHand)

                # draw
                if draw:
                    self.mpDraw.draw_landmarks(img, handLms,
                                               self.mpHands.HAND_CONNECTIONS)
                    cv2.rectangle(img, (bbox[0] - 20, bbox[1] - 20),
                                  (bbox[0] + bbox[2] + 20, bbox[1] + bbox[3] + 20),
                                  (255, 0, 255), 2)
                    cv2.putText(img, myHand["type"], (bbox[0] - 30, bbox[1] - 30),
                                cv2.FONT_HERSHEY_PLAIN, 2, (255, 0, 255), 2)
        if draw:
            return allHands, img
        else:
            return allHands

    def fingersUp(self, myHand):
        """Return five 0/1 flags (thumb to pinky); 1 means the finger is up."""
        myHandType = myHand["type"]
        myLmList = myHand["lmList"]
        fingers = []  # initialized up front so the return below is always valid
        if self.results.multi_hand_landmarks:
            # Thumb: compare x of the tip with the joint below it
            if myHandType == "Right":
                if myLmList[self.tipIds[0]][0] > myLmList[self.tipIds[0] - 1][0]:
                    fingers.append(1)
                else:
                    fingers.append(0)
            else:
                if myLmList[self.tipIds[0]][0] < myLmList[self.tipIds[0] - 1][0]:
                    fingers.append(1)
                else:
                    fingers.append(0)

            # 4 Fingers: the tip is above the joint two landmarks below it
            for id in range(1, 5):
                if myLmList[self.tipIds[id]][1] < myLmList[self.tipIds[id] - 2][1]:
                    fingers.append(1)
                else:
                    fingers.append(0)
        return fingers

    def findDistance(self, p1, p2, img=None):
        """Return the distance between p1 and p2 plus (x1, y1, x2, y2, cx, cy)."""
        x1, y1 = p1
        x2, y2 = p2
        cx, cy = (x1 + x2) // 2, (y1 + y2) // 2
        length = math.hypot(x2 - x1, y2 - y1)
        info = (x1, y1, x2, y2, cx, cy)
        if img is not None:
            cv2.circle(img, (x1, y1), 15, (255, 0, 255), cv2.FILLED)
            cv2.circle(img, (x2, y2), 15, (255, 0, 255), cv2.FILLED)
            cv2.line(img, (x1, y1), (x2, y2), (255, 0, 255), 3)
            cv2.circle(img, (cx, cy), 15, (255, 0, 255), cv2.FILLED)
            return length, info, img
        else:
            return length, info


def main():
    cap = cv2.VideoCapture(0)
    detector = handDetector(detectionCon=0.8, maxHands=2)
    while True:
        # Get image frame
        success, img = cap.read()
        if not success:
            break
        # Find the hand and its landmarks
        hands, img = detector.findHands(img)  # with draw
        # hands = detector.findHands(img, draw=False)  # without draw

        if hands:
            # Hand 1
            hand1 = hands[0]
            lmList1 = hand1["lmList"]  # List of 21 Landmark points
            bbox1 = hand1["bbox"]  # Bounding box info x,y,w,h
            centerPoint1 = hand1['center']  # center of the hand cx,cy
            handType1 = hand1["type"]  # Hand Type "Left" or "Right"
            fingers1 = detector.fingersUp(hand1)

            if len(hands) == 2:
                # Hand 2
                hand2 = hands[1]
                lmList2 = hand2["lmList"]  # List of 21 Landmark points
                bbox2 = hand2["bbox"]  # Bounding box info x,y,w,h
                centerPoint2 = hand2['center']  # center of the hand cx,cy
                handType2 = hand2["type"]  # Hand Type "Left" or "Right"
                fingers2 = detector.fingersUp(hand2)

                # Find Distance between two Landmarks. Could be same hand or different hands
                length, info, img = detector.findDistance(lmList1[8][0:2], lmList2[8][0:2], img)  # with draw
                # length, info = detector.findDistance(lmList1[8], lmList2[8])  # without draw

        # Display
        cv2.imshow("Image", img)
        if cv2.waitKey(1) == 27:  # Esc quits
            break


if __name__ == "__main__":
    main()
```

gesture_zoom.py:

```python
import cv2
import time
import HandTrackingModule as htm

startDist = None   # hand distance when the gesture began; None means not zooming
scale = 0          # pixels added to (or removed from) each side of the poster
cx, cy = 500, 200  # center of the poster inside the camera frame
wCam, hCam = 1280, 720
pTime = 0

cap = cv2.VideoCapture(0)
cap.set(cv2.CAP_PROP_FRAME_WIDTH, wCam)    # property id 3
cap.set(cv2.CAP_PROP_FRAME_HEIGHT, hCam)   # property id 4
cap.set(cv2.CAP_PROP_BRIGHTNESS, 150)      # property id 10

detector = htm.handDetector(detectionCon=0.75)

# Load the poster once; every frame resizes from this original
poster = cv2.imread("1.png")
if poster is None:
    raise FileNotFoundError("Could not read 1.png")

while True:
    success, img = cap.read()
    if not success:
        break
    handsimformation, img = detector.findHands(img)
    # img[0:360, 0:260] = poster

    if len(handsimformation) == 2:
        # print(detector.fingersUp(handsimformation[0]), detector.fingersUp(handsimformation[1]))
        if detector.fingersUp(handsimformation[0]) == [1, 1, 1, 0, 0] and \
                detector.fingersUp(handsimformation[1]) == [1, 1, 1, 0, 0]:
            lmList1 = handsimformation[0]['lmList']
            lmList2 = handsimformation[1]['lmList']
            # Alternative: measure between the two index fingertips (landmark 8)
            # length, info, img = detector.findDistance(lmList1[8], lmList2[8], img)
            length, info, img = detector.findDistance(handsimformation[0]["center"],
                                                      handsimformation[1]["center"], img)
            if startDist is None:
                startDist = length  # first frame of the gesture sets the baseline
            scale = int((length - startDist) // 2)
            cx, cy = info[4:]
            print(scale)
    else:
        startDist = None

    try:
        h1, w1, _ = poster.shape
        # Force even sizes so the slice below matches the resized image exactly
        newH, newW = ((h1 + scale) // 2) * 2, ((w1 + scale) // 2) * 2
        img1 = cv2.resize(poster, (newW, newH))
        img[cy - newH // 2:cy + newH // 2, cx - newW // 2:cx + newW // 2] = img1
    except (ValueError, cv2.error):
        # Poster leaves the frame or shrinks to nothing: skip drawing this frame
        pass

    # ---------------- FPS display ----------------
    cTime = time.time()
    fps = 1 / (cTime - pTime)
    pTime = cTime
    cv2.putText(img, f'FPS: {int(fps)}', (40, 50), cv2.FONT_HERSHEY_COMPLEX,
                1, (100, 0, 255), 3)

    cv2.imshow("image", img)
    k = cv2.waitKey(1)
    if k == 27:  # Esc quits
        break

cap.release()
cv2.destroyAllWindows()
```

I won't go through the class module in detail. Its newly added features are covered as they come up in the walkthrough of the main file.

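1. First, import the required modules. Start with the code that opens the camera to confirm it works, then set the capture width, height and brightness. Create the detector with htm.handDetector (htm is short for HandTrackingModule throughout) and raise the detection confidence so hands are detected more reliably.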
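The bare numbers 3, 4 and 10 passed to cap.set are the ids of OpenCV's frame width, frame height and brightness properties. Here is a minimal sketch of applying them and recording what set reported for each one. configure_capture and FakeCapture are hypothetical names; FakeCapture only stands in for cv2.VideoCapture so the snippet runs without a camera.

```python
# Numeric ids behind OpenCV's named capture properties.
CAP_PROP_FRAME_WIDTH = 3    # cv2.CAP_PROP_FRAME_WIDTH
CAP_PROP_FRAME_HEIGHT = 4   # cv2.CAP_PROP_FRAME_HEIGHT
CAP_PROP_BRIGHTNESS = 10    # cv2.CAP_PROP_BRIGHTNESS


def configure_capture(cap, width=1280, height=720, brightness=150):
    """Apply the settings and return {property id: value returned by set}."""
    requested = {
        CAP_PROP_FRAME_WIDTH: width,
        CAP_PROP_FRAME_HEIGHT: height,
        CAP_PROP_BRIGHTNESS: brightness,
    }
    return {prop: bool(cap.set(prop, value)) for prop, value in requested.items()}


class FakeCapture:
    """Hypothetical stand-in for cv2.VideoCapture, used only for this demo."""

    def __init__(self, unsupported=()):
        self.unsupported = set(unsupported)
        self.values = {}

    def set(self, prop, value):
        if prop in self.unsupported:
            return False
        self.values[prop] = value
        return True


fake = FakeCapture(unsupported={CAP_PROP_BRIGHTNESS})
print(configure_capture(fake))  # {3: True, 4: True, 10: False}
```

With a real camera you would pass cv2.VideoCapture(0) instead. Many webcams ignore the brightness setting, so checking the return value helps explain why a setting appears to have no effect.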

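2. Next, findHands returns the landmarks of each hand. The gesture I chose is thumb, index finger and middle finger raised on both hands, six raised fingers in total, with the ring and little fingers folded. fingersUp reports this as [1, 1, 1, 0, 0] for each hand, so we compare against [1, 1, 1, 0, 0] and [1, 1, 1, 0, 0]. If you are unsure what your hands produce, uncomment this line in the code: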

#print(detector.fingersUp(handsimformation[0]),detector.fingersUp(handsimformation[1]))
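The gesture test can be sketched as a small helper. It assumes the input is a list with one fingersUp result per detected hand; is_zoom_gesture and ZOOM_GESTURE are illustrative names and not part of the module.

```python
ZOOM_GESTURE = [1, 1, 1, 0, 0]  # thumb, index, middle up; ring and pinky down


def is_zoom_gesture(fingers_by_hand):
    """True when exactly two hands are given and both show the zoom gesture."""
    return len(fingers_by_hand) == 2 and all(
        fingers == ZOOM_GESTURE for fingers in fingers_by_hand
    )


print(is_zoom_gesture([[1, 1, 1, 0, 0], [1, 1, 1, 0, 0]]))  # True
print(is_zoom_gesture([[1, 1, 1, 0, 0], [0, 1, 1, 0, 0]]))  # False
print(is_zoom_gesture([[1, 1, 1, 0, 0]]))                   # False
```

Keeping the pattern in one constant makes it easy to try a different gesture, for example [0, 1, 0, 0, 0] for index fingers only.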

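3. Then there are two choices for the measuring points: handsimformation[0]['lmList'], from which index 8 gives the index fingertip, and handsimformation[0]["center"], the center of the palm. The demo uses the center points, but you can pick whichever landmark you prefer. startDist = None means no starting distance has been recorded yet. On the first frame in which the gesture is detected, the current distance becomes startDist. On every following frame the change is length - startDist, so moving the hands apart gives a larger scale. The raw difference changes too quickly, so it is halved with floor division and converted to an integer. A negative value shrinks the image. When two hands are no longer detected, startDist is reset to None.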
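The baseline logic described above can be isolated in a small class. This is only a sketch: ZoomTracker and its method names are hypothetical, and the distances are sample values in place of what findDistance would return.

```python
class ZoomTracker:
    """Tracks the starting hand distance and derives the zoom offset."""

    def __init__(self):
        self.start_dist = None  # None means no gesture in progress
        self.scale = 0

    def update(self, length):
        """Call once per frame while the gesture is held."""
        if self.start_dist is None:
            self.start_dist = length  # first frame sets the baseline
        # Halve the change so the poster does not resize too quickly
        self.scale = int((length - self.start_dist) // 2)
        return self.scale

    def reset(self):
        """Call when two hands are no longer detected."""
        self.start_dist = None


tracker = ZoomTracker()
print(tracker.update(200.0))  # 0
print(tracker.update(260.0))  # 30
print(tracker.update(150.0))  # -25
tracker.reset()
print(tracker.update(150.0))  # 0
```

As in the main script, reset clears only the baseline. The last scale is kept, so the poster stays at its current size until the next gesture starts.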

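4. Next, look at info = (x1, y1, x2, y2, cx, cy): indexing it with info[4:] gives the midpoint between the two hands, which becomes the center of the poster. We then read the poster's current size and add scale to it to get dynamic zooming. Note that the new size is floor-divided by 2 and then multiplied by 2. For an even value this changes nothing, but for an odd value the region we paste into would be one pixel short. For example, with a value of 9, floor division by 2 gives 4, and 4 + 4 = 8 < 9, so the 9-pixel image would not fit the 8-pixel region. Rounding to an even number keeps the two sizes equal. The try...except is there because the assignment raises an error when the poster extends beyond the window. While it is out of bounds the paste is skipped, and once it is back inside the window the zoom works again.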
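To make the size arithmetic concrete, here is a sketch of the even rounding together with one possible alternative to the try...except: clipping the paste region to the frame so the visible part of the poster is still drawn. Both function names are hypothetical, and the clipping approach is my substitution, not what the script above does.

```python
def even_size(height, width, scale):
    """Add scale to both sides, rounding each result down to an even number."""
    return ((height + scale) // 2) * 2, ((width + scale) // 2) * 2


def paste_bounds(center, size, frame_size):
    """Clip a rectangle centered at (cx, cy) to the frame.

    Returns (frame_rows, frame_cols, overlay_rows, overlay_cols), each a
    (start, stop) pair, or None when no part of the rectangle is visible.
    """
    (cx, cy), (h, w), (frame_h, frame_w) = center, size, frame_size
    top, left = cy - h // 2, cx - w // 2
    y0, y1 = max(top, 0), min(top + h, frame_h)
    x0, x1 = max(left, 0), min(left + w, frame_w)
    if y0 >= y1 or x0 >= x1:
        return None
    return (y0, y1), (x0, x1), (y0 - top, y1 - top), (x0 - left, x1 - left)


print(even_size(9, 9, 0))       # (8, 8)
print(even_size(360, 260, 25))  # (384, 284)
print(paste_bounds((500, 200), (400, 300), (720, 1280)))
# ((0, 400), (350, 650), (0, 400), (0, 300))
print(paste_bounds((50, 50), (400, 300), (720, 1280)))
# ((0, 250), (0, 200), (150, 400), (100, 300))
```

With NumPy images the result would be used as img[y0:y1, x0:x1] = img1[oy0:oy1, ox0:ox1]. The caller should still check that the new height and width are positive before resizing.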

5. Finally, compute the frame rate and draw it on the image.
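The frame rate is the reciprocal of the time between two consecutive frames. Below is a small sketch that also guards against a zero interval. FpsCounter is a hypothetical helper, and the timestamps in the example are passed in by hand so the output is predictable.

```python
import time


class FpsCounter:
    """Computes frames per second from the time between consecutive ticks."""

    def __init__(self):
        self.prev = None

    def tick(self, now=None):
        """Return the FPS since the previous tick (0.0 when it is undefined)."""
        now = time.perf_counter() if now is None else now
        fps = 0.0
        if self.prev is not None and now > self.prev:
            fps = 1.0 / (now - self.prev)
        self.prev = now
        return fps


counter = FpsCounter()
print(counter.tick(1.0))  # 0.0 (first frame, no interval yet)
print(counter.tick(1.5))  # 2.0
print(counter.tick(1.5))  # 0.0 (zero interval, division skipped)
```

In the main loop you would call counter.tick() with no argument once per frame and pass the result to cv2.putText.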
