缺失数据Bootstrap与Jackknife方法 R语言学习笔记缺失数据的Bootstrap与Jackknife方法

Kanny广小隶 人气:0一、题目

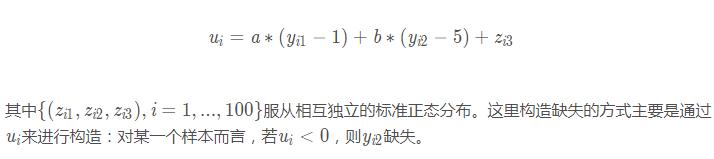

下面再加入缺失的情况来继续深入探讨,同样还是如习题1.6的构造方式来加入缺失值,其中a=2, b = 0

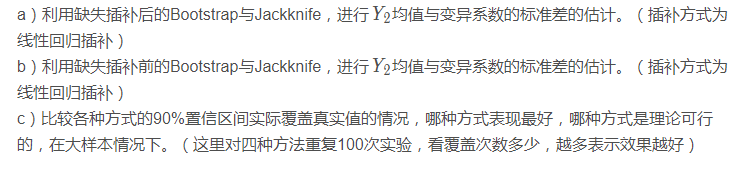

我们将进行如下几种操作:

二、解答

a)Bootstrap与Jackknife进行估计

首先构建生成数据函数。

# 生成数据

# 生成数据

GenerateData <- function(a = 0, b = 0) {

y <- matrix(nrow = 3, ncol = 100)

z <- matrix(rnorm(300), nrow = 3)

y[1, ] <- 1 + z[1, ]

y[2, ] <- 5 + 2 * z[1, ] + z[2, ]

u <- a * (y[1, ] - 1) + b * (y[2, ] - 5) + z[3, ]

# m2 <- 1 * (u < 0)

y[3, ] <- y[2, ]

y[3, u < 0] <- NA

dat_comp <- data.frame(y1 = y[1, ], y2 = y[2, ])

dat_incomp <- data.frame(y1 = y[1, ], y2 = y[3, ])

# dat_incomp <- na.omit(dat_incomp)

return(list(dat_comp = dat_comp, dat_incomp = dat_incomp))

}

Bootstrap与Jackknife的函数:

Bootstrap1 <- function(Y, B = 200, fun) {

Y_len <- length(Y)

mat_boots <- matrix(sample(Y, Y_len * B, replace = T), nrow = B, ncol = Y_len)

statis_boots <- apply(mat_boots, 1, fun)

boots_mean <- mean(statis_boots)

boots_sd <- sd(statis_boots)

return(list(mean = boots_mean, sd = boots_sd))

}

Jackknife1 <- function(Y, fun) {

Y_len <- length(Y)

mat_jack <- sapply(1:Y_len, function(i) Y[-i])

redu_samp <- apply(mat_jack, 2, fun)

jack_mean <- mean(redu_samp)

jack_sd <- sqrt(((Y_len - 1) ^ 2 / Y_len) * var(redu_samp))

return(list(mean = jack_mean, sd = jack_sd))

}

进行重复试验所需的函数:

RepSimulation <- function(seed = 2018, fun) {

set.seed(seed)

dat <- GenerateData()

dat_comp_y2 <- dat$dat_comp$y2

boots_sd <- Bootstrap1(dat_comp_y2, B = 200, fun)$sd

jack_sd <- Jackknife1(dat_comp_y2, fun)$sd

return(c(boots_sd = boots_sd, jack_sd = jack_sd))

}

下面重复100次实验进行 Y2的均值与变异系数标准差的估计:

nrep <- 100 ## 均值 fun = mean mat_boots_jack <- sapply(1:nrep, RepSimulation, fun) apply(mat_boots_jack, 1, function(x) paste(round(mean(x), 3), '±', round(sd(x), 3)))

## 变异系数 fun = function(x) sd(x) / mean(x) mat_boots_jack <- sapply(1:nrep, RepSimulation, fun) apply(mat_boots_jack, 1, function(x) paste(round(mean(x), 3), '±', round(sd(x), 3)))

从上面可以发现,Bootstrap与Jackknife两者估计结果较为相近,其中对均值标准差的估计,Jackknife的方差更小。这其实较为符合常识:Jackknife估计每次只取出一个样本,用剩下的样本来作为样本整体;而Bootstrap每次都会比较随机地重抽样,随机性相对较高,所以重复100次模拟实验,导致其方差相对较大。

下面我们用计算公式来进行推导。

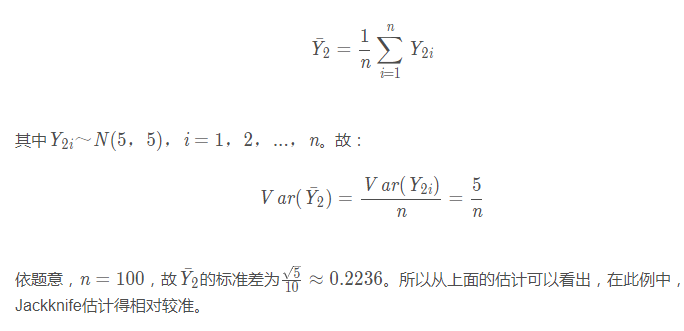

b)均值与变异系数(大样本)的标准差解析式推导与计算

均值

变异系数(大样本近似)

## 变异系数

sd(sapply(1:10000, function(x) {

set.seed(x)

dat <- GenerateData(a = 0, b = 0)

sd(dat$dat_comp$y2) / mean(dat$dat_comp$y2)

}))

变异系数大样本近似值为:0.03717648,说明前面的Bootstrap与Jackknife两种方法估计的都较为准确。

c)缺失插补后的Bootstrap与Jackknife

构造线性填补的函数,并进行线性填补。

DatImputation <- function(dat_incomp) {

dat_imp <- dat_incomp

lm_model = lm(y2 ~ y1, data = na.omit(dat_incomp))

# 找出y2缺失对应的那部分data

na_ind = is.na(dat_incomp$y2)

na_dat = dat_incomp[na_ind, ]

# 将缺失数据进行填补

dat_imp[na_ind, 'y2'] = predict(lm_model, na_dat)

return(dat_imp)

}

dat <- GenerateData(a = 2, b = 0)

dat_imp <- DatImputation(dat$dat_incomp)

fun = mean Bootstrap1(dat_imp$y2, B = 200, fun)$sd

Jackknife1(dat_imp$y2, fun)$sd

fun = function(x) sd(x) / mean(x) Bootstrap1(dat_imp$y2, B = 200, fun)$sd

Jackknife1(dat_imp$y2, fun)$sd

Bootstrap与Jackknife的填补结果,很大一部分是由于数据的缺失会造成距离真实值较远。但单从两种方法估计出来的值比较接近。

c)缺失插补前的Bootstrap与Jackknife

先构建相关的函数:

Array2meancv <- function(j, myarray) {

dat_incomp <- as.data.frame(myarray[, j, ])

names(dat_incomp) <- c('y1', 'y2')

dat_imp <- DatImputation(dat_incomp)

y2_mean <- mean(dat_imp$y2)

y2_cv <- sd(dat_imp$y2) / y2_mean

return(c(mean = y2_mean, cv = y2_cv))

}

Bootstrap_imp <- function(dat_incomp, B = 200) {

n <- nrow(dat_incomp)

array_boots <- array(dim = c(n, B, 2))

mat_boots_ind <- matrix(sample(1:n, n * B, replace = T), nrow = B, ncol = n)

array_boots[, , 1] <- sapply(1:B, function(i) dat_incomp$y1[mat_boots_ind[i, ]])

array_boots[, , 2] <- sapply(1:B, function(i) dat_incomp$y2[mat_boots_ind[i, ]])

mean_cv_imp <- sapply(1:B, Array2meancv, array_boots)

boots_imp_mean <- apply(mean_cv_imp, 1, mean)

boots_imp_sd <- apply(mean_cv_imp, 1, sd)

return(list(mean = boots_imp_mean, sd = boots_imp_sd))

}

Jackknife_imp <- function(dat_incomp) {

n <- nrow(dat_incomp)

array_jack <- array(dim = c(n - 1, n, 2))

array_jack[, , 1] <- sapply(1:n, function(i) dat_incomp[-i, 'y1'])

array_jack[, , 2] <- sapply(1:n, function(i) dat_incomp[-i, 'y2'])

mean_cv_imp <- sapply(1:n, Array2meancv, array_jack)

jack_imp_mean <- apply(mean_cv_imp, 1, mean)

jack_imp_sd <- apply(mean_cv_imp, 1, function(x) sqrt(((n - 1) ^ 2 / n) * var(x)))

return(list(mean = jack_imp_mean, sd = jack_imp_sd))

}

然后看看两种方式估计出来的结果:

Bootstrap_imp(dat$dat_incomp)$sd

Jackknife_imp(dat$dat_incomp)$sd

缺失插补前进行Bootstrap与Jackknife也还是有一定的误差,标准差都相对更大,表示波动会比较大。具体表现情况下面我们多次重复模拟实验,通过90%置信区间来看各个方法的优劣。

d)比较各种方式的90%置信区间情况(重复100次实验)

RepSimulationCI <- function(seed = 2018, stats = 'mean') {

mean_true <- 5

cv_true <- sqrt(5) / 5

myjudge <- function(x, value) {

return(ifelse((x$mean - qnorm(0.95) * x$sd < value) & (x$mean + qnorm(0.95) * x$sd > value), 1, 0))

}

if(stats == 'mean') {

fun = mean

value = mean_true

} else if(stats == 'cv') {

fun = function(x) sd(x) / mean(x)

value = cv_true

}

set.seed(seed)

boots_after_ind <- boots_before_ind <- jack_after_ind <- jack_before_ind <- 0

dat <- GenerateData(a = 2, b = 0)

dat_incomp <- dat$dat_incomp

# after imputation

dat_imp <- DatImputation(dat_incomp)

boots_after <- Bootstrap1(dat_imp$y2, B = 200, fun)

boots_after_ind <- myjudge(boots_after, value)

jack_after <- Jackknife1(dat_imp$y2, fun)

jack_after_ind <- myjudge(jack_after, value)

# before imputation

boots_before <- Bootstrap_imp(dat_incomp)

jack_before <- Jackknife_imp(dat_incomp)

if(stats == 'mean') {

boots_before$mean <- boots_before$mean[1]

boots_before$sd <- boots_before$sd[1]

jack_before$mean <- jack_before$mean[1]

jack_before$sd <- jack_before$sd[1]

} else if(stats == 'cv') {

boots_before$mean <- boots_before$mean[2]

boots_before$sd <- boots_before$sd[2]

jack_before$mean <- jack_before$mean[2]

jack_before$sd <- jack_before$sd[2]

}

boots_before_ind <- myjudge(boots_before, value)

jack_before_ind <- myjudge(jack_before, value)

return(c(boots_after = boots_after_ind,

boots_before = boots_before_ind,

jack_after = jack_after_ind,

jack_before = jack_before_ind))

}

重复100次实验,均值情况:

nrep <- 100

result_mean <- apply(sapply(1:nrep, RepSimulationCI, 'mean'), 1, sum)

names(result_mean) <- c('boots_after', 'boots_before', 'jack_after', 'jack_before')

result_mean

变异系数情况:

result_cv <- apply(sapply(1:nrep, RepSimulationCI, 'cv'), 1, sum)

names(result_cv) <- c('boots_after', 'boots_before', 'jack_after', 'jack_before')

result_cv

上面的数字越表示90%置信区间覆盖真实值的个数,数字越大表示覆盖的次数越多,也就说明该方法会相对更好。

填补之前进行Bootstrap或Jackknife

无论是均值还是变异系数,通过模拟实验都能看出,在填补之前进行Bootstrap或Jackknife,其估计均会远优于在填补之后进行Bootstrap或Jackknife。而具体到Bootstrap或Jackknife,这两种方法相差无几。

填补之后进行Bootstrap或Jackknife

在填补之后进行Bootstrap或Jackknife,效果都会很差,其实仔细思考后也能够理解,本身缺失了近一半的数据,然后填补会带来很大的偏差,此时我们再从中抽样,有很大可能抽出来的绝大多数都是原本填补的有很大偏差的样本,这样估计就会更为不准了。

当然,从理论上说,填补之前进行Bootstrap或Jackknife是较为合理的,这样对每个Bootstrap或Jackknife样本,都可以用当前的观测值去填补当前的缺失值,这样每次填补可能花费的时间将对较长,但实际却更有效。

加载全部内容