An In-Depth Look at Patroni in Docker Containers

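PostgreSQLChina

The previous article walked through setting up Repmgr and achieving automatic failover. This article shows how to build a Patroni cluster inside containers. As an out-of-the-box high-availability tool for PostgreSQL, Patroni is increasingly used by vendors in cloud environments.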

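The basic Patroni architecture is shown in the figure:

etcd serves as the distributed registry and handles leader election for the cluster; vip-manager assigns a floating IP to the primary node; Patroni bootstraps, runs, and manages the cluster, and patronictl provides terminal access to it.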

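The workflow:

1. Start the etcd cluster first; this example uses three etcd members.

2. Once the etcd cluster is confirmed healthy, start Patroni on each node. The nodes compete in the leader election, and the followers then synchronize data from the leader.

3. Start vip-manager. It reads the value of the /${SERVICE_NAME}/${CLUSTER_NAME}/leader key in the etcd cluster to determine whether the current node is the primary; if it is, vip-manager assigns the VIP to that node so that it can serve external reads and writes.

Note: in a real environment, etcd should be deployed in separate containers and exposed as a service.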
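Step 3 can be sketched in shell. This is a minimal illustration of the check, not vip-manager's actual implementation: `leader_key` and `is_leader` are hypothetical helper names, and the sketch assumes the leader key holds the Patroni member name of the current primary (the `name` value in the Patroni configuration).

```shell
#!/bin/bash
# Hypothetical helpers illustrating the check described in step 3.

# Build the etcd key that holds the current leader for a service/cluster pair.
leader_key() {
    local service_name=$1 cluster_name=$2
    printf '/%s/%s/leader' "$service_name" "$cluster_name"
}

# Succeed when the value read from the leader key names this node.
is_leader() {
    local leader_value=$1 node_name=$2
    [ "$leader_value" = "$node_name" ]
}

key=$(leader_key "service" "patronicluster")
echo "leader key: ${key}"

# In a running cluster the value would be read from etcd with etcdctl
# (the exact command depends on the etcd API version in use).
# A sample value is assumed here so the sketch runs without etcd.
leader_value="patroni1"
if is_leader "$leader_value" "patroni1"; then
    echo "this node would hold the VIP"
fi
```

Every node runs the same comparison, so only the node whose name matches the key's value ends up holding the VIP.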

Creating the Image

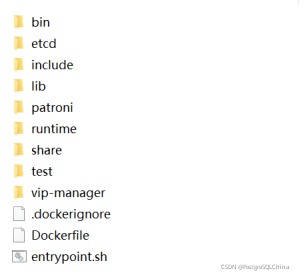

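File Structure

Dockerfile is the main image file; the Docker service uses it to create the image in the local repository. entrypoint.sh is the container entry point and drives the startup logic. function is the entry point for the actual work: it starts etcd, monitors the etcd cluster state, and starts Patroni and vip-manager. generatefile produces the configuration files for the whole container, covering etcd, Patroni, and vip-manager.

The directory layout is roughly as shown in the figure:

Note: build the database package and the Patroni package yourself.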
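Because the packages have to be supplied by hand, it can help to confirm that the build context is complete before running the build. The following is only a sketch: `check_build_context` is a hypothetical helper, and its path list is taken from the COPY instructions in the Dockerfile listed in the next section.

```shell
#!/bin/bash
# Hypothetical pre-build check: report paths the Dockerfile expects but the
# build context lacks. Returns non-zero when anything is missing.
check_build_context() {
    local context_dir=$1 missing=0 path
    local required=(
        Dockerfile entrypoint.sh
        etcd/etcd etcd/etcdctl
        vip-manager/vip-manager
        bin lib include share
        patroni runtime
    )
    for path in "${required[@]}"; do
        if [ ! -e "${context_dir}/${path}" ]; then
            echo "missing: ${path}"
            missing=1
        fi
    done
    return "$missing"
}

# Example: check the current directory before running docker build.
check_build_context "." || echo "build context is incomplete"
```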

Dockerfile

FROM centos:7
LABEL maintainer="wangzhibin <wangzhibin>"

ENV USER="postgresql" \
    PASSWORD=123456 \
    GROUP=postgresql

RUN useradd ${USER} \
    && chown -R ${USER}:${GROUP} /home/${USER} \
    && yum -y update \
    && yum install -y iptables sudo net-tools iproute openssh-server openssh-clients which vim crontabs

# Install etcd
COPY etcd/etcd /usr/sbin
COPY etcd/etcdctl /usr/sbin

# Install the database
COPY lib/ /home/${USER}/lib
COPY include/ /home/${USER}/include
COPY share/ /home/${USER}/share
COPY bin/ /home/${USER}/bin/
COPY patroni/ /home/${USER}/patroni

# Install vip-manager
COPY vip-manager/vip-manager /usr/sbin

# Install the startup scripts
COPY runtime/ /home/${USER}/runtime
COPY entrypoint.sh /sbin/entrypoint.sh

# Set environment variables
ENV LD_LIBRARY_PATH=/home/${USER}/lib \
    PATH=/home/${USER}/bin:$PATH \
    ETCDCTL_API=3

# Install Patroni
RUN yum -y install epel-release python-devel && yum -y install python-pip \
    && pip install /home/${USER}/patroni/1/pip-20.3.3.tar.gz \
    && pip install /home/${USER}/patroni/1/psycopg2-2.8.6-cp27-cp27mu-linux_x86_64.whl \
    && pip install --no-index --find-links=/home/${USER}/patroni/2/ -r /home/${USER}/patroni/2/requirements.txt \
    && pip install /home/${USER}/patroni/3/patroni-2.0.1-py2-none-any.whl

# Set ownership and execute permissions
RUN chmod 755 /sbin/entrypoint.sh \
    && mkdir /home/${USER}/etcddata \
    && chown -R ${USER}:${GROUP} /home/${USER} \
    && echo 'root:root123456' | chpasswd \
    && chmod 755 /usr/sbin/etcd /usr/sbin/etcdctl /usr/sbin/vip-manager

# Allow the service user to run sudo without a password
RUN chmod 777 /etc/sudoers \
    && sed -i '/## Allow root to run any commands anywhere/a '${USER}' ALL=(ALL) NOPASSWD:ALL' /etc/sudoers \
    && chmod 440 /etc/sudoers

# Switch user
USER ${USER}

# Switch working directory
WORKDIR /home/${USER}

# Start the entry point
CMD ["/bin/bash", "/sbin/entrypoint.sh"]

entrypoint.sh

#!/bin/bash
set -e

# shellcheck source=runtime/function
source "/home/${USER}/runtime/function"

configure_patroni

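function

#!/bin/bash
set -e

source "/home/${USER}/runtime/env-defaults"
source "/home/${USER}/runtime/generatefile"

PG_DATADIR=/home/${USER}/pgdata
PG_BINDIR=/home/${USER}/bin

configure_patroni()
{
    # Generate the configuration files
    generate_etcd_conf
    generate_patroni_conf
    generate_vip_conf

    # Build the comma-separated list of etcd client endpoints from HOSTLIST
    # (port 2379 matches listen-client-urls in etcd.yml)
    endpoints=""
    for host in ${HOSTLIST//,/ }
    do
        endpoints+="http://${host}:2379,"
    done
    endpoints=${endpoints%,}

    # Start etcd in the background
    etcd --config-file="/home/${USER}/etcd.yml" > "/home/${USER}/etcddata/etcd.log" 2>&1 &

    # Wait until ETCD_COUNT endpoints report "health":true
    count=0
    while [ "$count" -lt "${ETCD_COUNT}" ]
    do
        echo "waiting etcd cluster"
        sleep 5
        # etcdctl can exit non-zero while members are unhealthy; keep set -e from aborting the wait
        health=$(etcdctl --endpoints="${endpoints}" endpoint health -w json 2>/dev/null || true)
        count=$(echo "$health" | grep -o '"health":true' | wc -l)
    done

    # Start Patroni in the background
    patroni "/home/${USER}/postgresql.yml" > "/home/${USER}/patroni/patroni.log" 2>&1 &

    # Start vip-manager in the foreground; it keeps the container running
    sudo vip-manager --config "/home/${USER}/vip.yml"
}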
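The wait loop depends on counting how many endpoints report healthy in the JSON printed by `etcdctl endpoint health -w json`. That counting step is sketched in isolation below; `count_healthy` is a hypothetical helper, and the sample JSON is an assumed shape for illustration rather than captured output.

```shell
#!/bin/bash
# Hypothetical helper: count occurrences of "health":true in a JSON string.
count_healthy() {
    local json=$1
    # grep -o prints each match on its own line; wc -l counts those lines.
    printf '%s\n' "$json" | grep -o '"health":true' | wc -l | tr -d ' '
}

# Assumed sample: two healthy members and one unhealthy member.
sample='[{"endpoint":"http://patroni1:2379","health":true},{"endpoint":"http://patroni2:2379","health":true},{"endpoint":"http://patroni3:2379","health":false}]'

healthy=$(count_healthy "$sample")
echo "healthy endpoints: ${healthy}"

# The cluster counts as ready only when every expected member is healthy.
expected=3
if [ "$healthy" -lt "$expected" ]; then
    echo "waiting etcd cluster"
fi
```

Matching on the literal text is brittle if the output format changes; a JSON-aware tool would be more robust where one is available in the image.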

generatefile

#!/bin/bash
set -e

HOSTNAME="$(hostname)"
# Resolve this container's IP address from the first line of the ping output
hostip=$(ping "${HOSTNAME}" -c 1 -w 1 | sed '1{s/[^(]*(//;s/).*//;q}')

# Generate the etcd configuration
generate_etcd_conf()
{
    # Build "host=http://host:2380,..." from HOSTLIST
    initial_cluster=""
    for host in ${HOSTLIST//,/ }
    do
        initial_cluster+="${host}=http://${host}:2380,"
    done

    # Overwrite instead of appending so a container restart does not duplicate keys
    cat > "/home/${USER}/etcd.yml" <<EOF
name: ${HOSTNAME}
data-dir: /home/${USER}/etcddata
listen-client-urls: http://0.0.0.0:2379
advertise-client-urls: http://${hostip}:2379
listen-peer-urls: http://0.0.0.0:2380
initial-advertise-peer-urls: http://${hostip}:2380
initial-cluster: ${initial_cluster%,}
initial-cluster-token: etcd-cluster-token
initial-cluster-state: new
EOF
}

# Generate the Patroni configuration
generate_patroni_conf()
{
    cat > "/home/${USER}/postgresql.yml" <<EOF
scope: ${CLUSTER_NAME}
namespace: /${SERVICE_NAME}/
name: ${HOSTNAME}
restapi:
  listen: ${hostip}:8008
  connect_address: ${hostip}:8008
etcd:
  host: ${hostip}:2379
  username: ${ETCD_USER}
  password: ${ETCD_PASSWD}
bootstrap:
  dcs:
    ttl: 30
    loop_wait: 10
    retry_timeout: 10
    maximum_lag_on_failover: 1048576
    postgresql:
      use_pg_rewind: true
      use_slots: true
      parameters:
  initdb:
  - encoding: UTF8
  - data-checksums
  pg_hba:
  - host replication ${USER} 0.0.0.0/0 md5
  - host all all 0.0.0.0/0 md5
postgresql:
  listen: 0.0.0.0:5432
  connect_address: ${hostip}:5432
  data_dir: ${PG_DATADIR}
  bin_dir: ${PG_BINDIR}
  pgpass: /tmp/pgpass
  authentication:
    replication:
      username: ${USER}
      password: ${PASSWD}
    superuser:
      username: ${USER}
      password: ${PASSWD}
    rewind:
      username: ${USER}
      password: ${PASSWD}
  parameters:
    unix_socket_directories: '.'
    wal_level: hot_standby
    max_wal_senders: 10
    max_replication_slots: 10
tags:
  nofailover: false
  noloadbalance: false
  clonefrom: false
  nosync: false
EOF
}

# ........ remaining content omitted
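The initial-cluster value that generate_etcd_conf writes is derived from the comma-separated HOSTLIST variable. As a standalone sketch of that string handling (`build_initial_cluster` is a hypothetical name, and each host is assumed to serve etcd peers on port 2380, as in the configuration above):

```shell
#!/bin/bash
# Hypothetical helper: turn "a,b,c" into "a=http://a:2380,b=http://b:2380,...".
build_initial_cluster() {
    local hostlist=$1 result="" host
    local hosts
    # Split on commas into an array instead of relying on unquoted word splitting.
    IFS=',' read -r -a hosts <<< "$hostlist"
    for host in "${hosts[@]}"; do
        result+="${host}=http://${host}:2380,"
    done
    # Drop the trailing comma.
    printf '%s' "${result%,}"
}

build_initial_cluster "patroni1,patroni2,patroni3"
echo
```

Each member name in the result has to match the `name` value of the corresponding etcd node, which is why the containers are started with hostnames taken from HOSTLIST.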

Building the Image

docker build -t patroni .

Running the Image

Run container node 1:

docker run --privileged --name patroni1 -itd --hostname patroni1 --net my_net3 --restart always --env 'CLUSTER_NAME=patronicluster' --env 'SERVICE_NAME=service' --env 'ETCD_USER=etcduser' --env 'ETCD_PASSWD=etcdpasswd' --env 'PASSWD=zalando' --env 'HOSTLIST=patroni1,patroni2,patroni3' --env 'VIP=172.22.1.88' --env 'NET_DEVICE=eth0' --env 'ETCD_COUNT=3' patroni

Run container node 2:

docker run --privileged --name patroni2 -itd --hostname patroni2 --net my_net3 --restart always --env 'CLUSTER_NAME=patronicluster' --env 'SERVICE_NAME=service' --env 'ETCD_USER=etcduser' --env 'ETCD_PASSWD=etcdpasswd' --env 'PASSWD=zalando' --env 'HOSTLIST=patroni1,patroni2,patroni3' --env 'VIP=172.22.1.88' --env 'NET_DEVICE=eth0' --env 'ETCD_COUNT=3' patroni

Run container node 3:

docker run --privileged --name patroni3 -itd --hostname patroni3 --net my_net3 --restart always --env 'CLUSTER_NAME=patronicluster' --env 'SERVICE_NAME=service' --env 'ETCD_USER=etcduser' --env 'ETCD_PASSWD=etcdpasswd' --env 'PASSWD=zalando' --env 'HOSTLIST=patroni1,patroni2,patroni3' --env 'VIP=172.22.1.88' --env 'NET_DEVICE=eth0' --env 'ETCD_COUNT=3' patroni
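The three commands differ only in the container name and hostname, so they can be generated in a loop. The sketch below prints the commands instead of running them; `node_run_command` is a hypothetical helper, and it assumes the user-defined network my_net3 already exists with a subnet that contains the VIP.

```shell
#!/bin/bash
# Hypothetical helper: print the docker run command for one node.
node_run_command() {
    local node=$1
    local cmd=(
        docker run --privileged --name "$node" -itd
        --hostname "$node" --net my_net3 --restart always
        --env CLUSTER_NAME=patronicluster
        --env SERVICE_NAME=service
        --env ETCD_USER=etcduser
        --env ETCD_PASSWD=etcdpasswd
        --env PASSWD=zalando
        --env HOSTLIST=patroni1,patroni2,patroni3
        --env VIP=172.22.1.88
        --env NET_DEVICE=eth0
        --env ETCD_COUNT=3
        patroni
    )
    # Join the array elements with spaces into a single command line.
    echo "${cmd[*]}"
}

# Print one command per node listed in HOSTLIST.
for node in patroni1 patroni2 patroni3; do
    node_run_command "$node"
done
```

Printing first makes it easy to review the commands; piping the output to `sh` would then start the containers.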

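Summary

This procedure is intended for test environments only and demonstrates an end-to-end containerized setup of etcd + Patroni + vip-manager. In a real environment, etcd should be deployed in separate containers as an independent distributed cluster, and PostgreSQL storage should be mapped to local or network disks. Container clusters are also best built with an orchestration tool such as docker-compose, Docker Swarm, or Kubernetes.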

附图

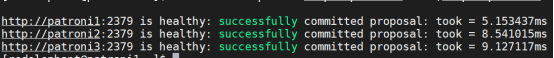

etcd集群状态如图:

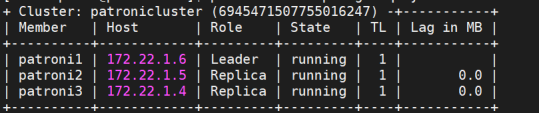

patroni集群状态如图:

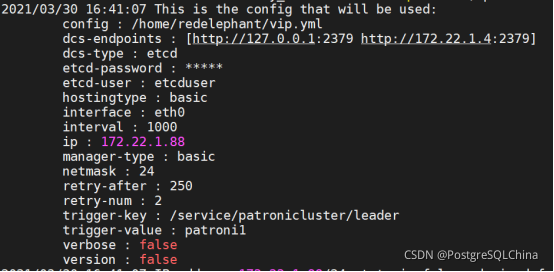

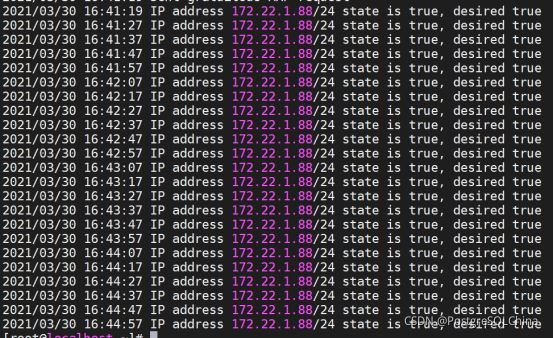

vip-manager状态如图:
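The states shown in these figures can be checked from inside one of the containers. The sketch below only prints the commands by default (set DRY_RUN=0 to execute them); the `run` wrapper is a hypothetical helper, and the endpoint names, configuration path, and interface name are assumed from the values used earlier in this article.

```shell
#!/bin/bash
# Hypothetical wrapper: print a command in dry-run mode, otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# etcd cluster status: one row per member (ETCDCTL_API=3 is set in the image).
run etcdctl --endpoints=http://patroni1:2379,http://patroni2:2379,http://patroni3:2379 endpoint status -w table

# Patroni cluster status: members, roles, and state.
run patronictl -c /home/postgresql/postgresql.yml list

# vip-manager result: the VIP should appear on the primary's interface.
run ip addr show eth0
```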
